MediaPipe overview

MediaPipe provides on-device, real-time ML pipelines for camera-based perception. Typical use cases include pose, hand, and face tracking plus lightweight gesture classification. The key benefit is a consistent landmark representation: each tracker reports the same indexed set of landmarks on every frame, so downstream mapping (e.g., to interaction parameters) can stay stable and low-latency.
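As an illustration of the downstream mapping mentioned above, here is a minimal sketch that turns one normalized landmark coordinate into a smoothed interaction parameter. It assumes the coordinate is already in the range 0 to 1 (as MediaPipe's normalized landmarks are relative to the image size); the class name `SmoothedParam`, the exponential smoothing, and the default `alpha` are illustrative choices, not part of MediaPipe.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SmoothedParam:
    """Maps a normalized coordinate in [0, 1] to [lo, hi] with exponential smoothing.

    Hypothetical helper for illustration; not a MediaPipe API.
    """
    lo: float                      # parameter value when the coordinate is 0.0
    hi: float                      # parameter value when the coordinate is 1.0
    alpha: float = 0.3             # smoothing factor in (0, 1]; higher reacts faster
    value: Optional[float] = None  # last smoothed output, None before the first frame

    def update(self, coord: float) -> float:
        # Clamp first: landmarks can fall slightly outside the image.
        coord = min(1.0, max(0.0, coord))
        target = self.lo + (self.hi - self.lo) * coord
        if self.value is None:
            # First frame: start at the target instead of easing in from nothing.
            self.value = target
        else:
            # Move a fraction alpha of the way toward the new target.
            self.value += self.alpha * (target - self.value)
        return self.value


# Usage: drive a 0-100 "volume" from the vertical position of some landmark.
volume = SmoothedParam(lo=0.0, hi=100.0, alpha=0.5)
for y in (0.25, 0.75, 0.75):  # stand-in values for per-frame landmark y
    current = volume.update(y)
```

A lower `alpha` suppresses jitter at the cost of added lag, so the value is usually tuned per interaction.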

1. Common tracking tasks

- Pose tracking: full-body keypoints for posture and motion features.

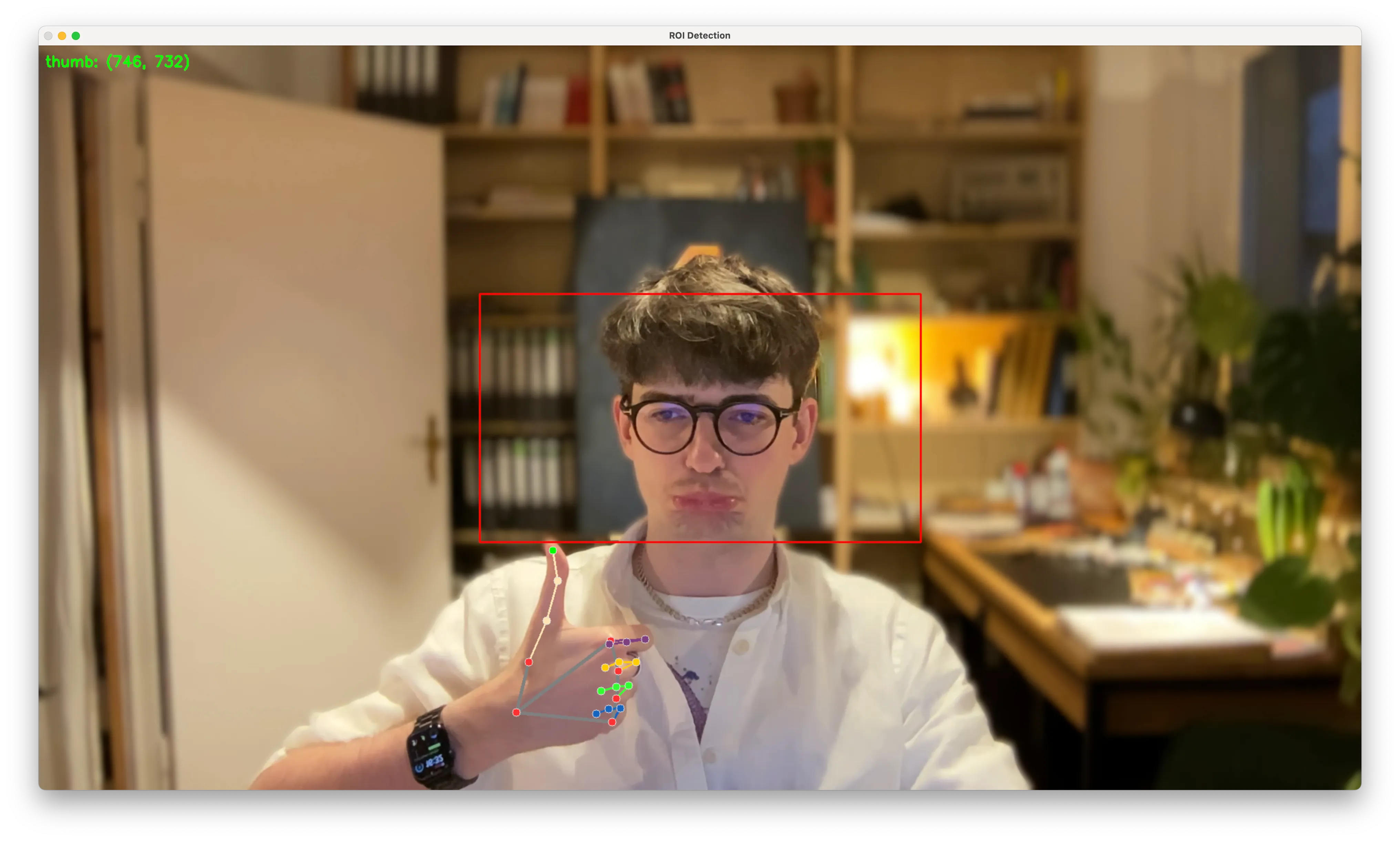

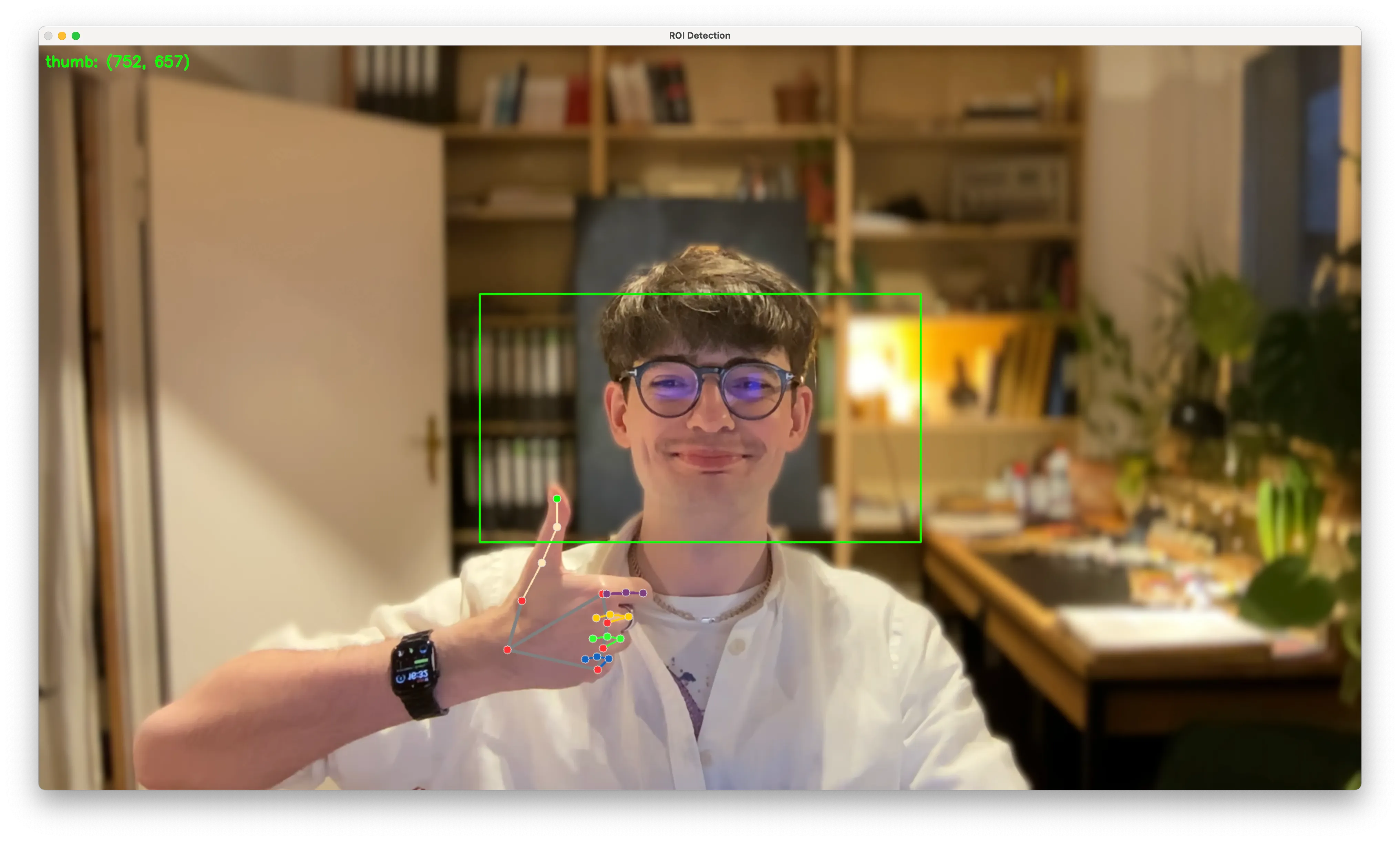

- Hand tracking: multi-joint hand landmarks for gestures and fine motor control.

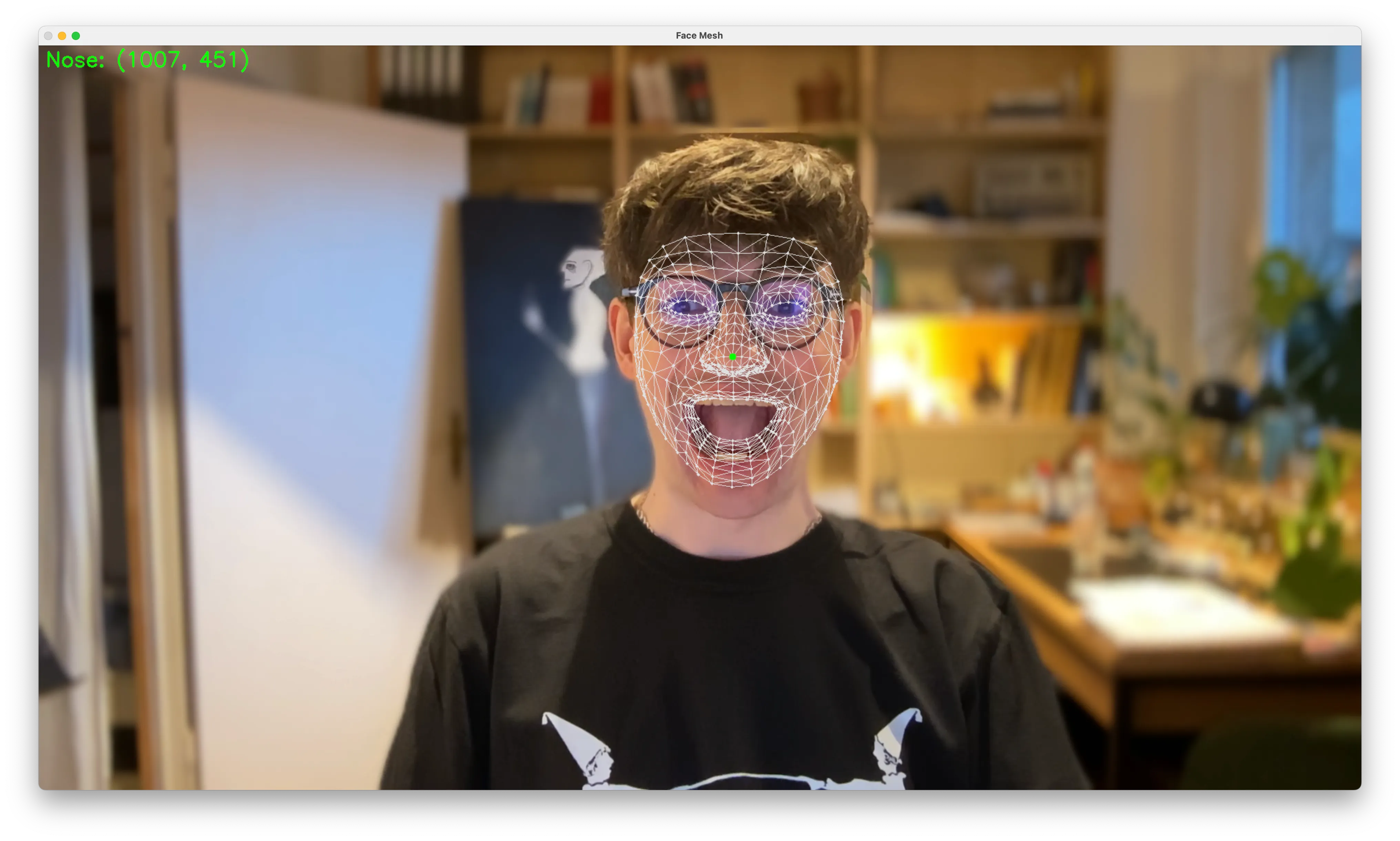

- Face tracking: facial landmarks for expression and head orientation.
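To make the gesture use case concrete, the following sketch classifies a simple pinch from hand landmarks. It relies on MediaPipe's 21-point hand layout (index 0 is the wrist, 4 the thumb tip, 8 the index fingertip, 9 the middle-finger MCP joint). The function name `is_pinching`, the normalization by hand size, and the `threshold` of 0.25 are assumptions made for illustration. Points are assumed to share one unit such as pixels, so normalized coordinates should be scaled by image width and height first.

```python
import math

# Indices in MediaPipe's 21-point hand landmark layout.
WRIST, THUMB_TIP, INDEX_TIP, MIDDLE_MCP = 0, 4, 8, 9


def is_pinching(landmarks, threshold=0.25):
    """Return True if thumb tip and index tip are close relative to hand size.

    landmarks: sequence of 21 (x, y) pairs in a common unit, e.g. pixels.
    threshold: hypothetical cutoff on the size-normalized distance.
    """
    def dist(a, b):
        ax, ay = landmarks[a]
        bx, by = landmarks[b]
        return math.hypot(ax - bx, ay - by)

    # Wrist-to-middle-MCP length serves as a rough scale for the hand,
    # so the result does not depend on distance from the camera.
    hand_size = dist(WRIST, MIDDLE_MCP)
    if hand_size == 0:
        return False  # degenerate input; cannot normalize
    return dist(THUMB_TIP, INDEX_TIP) / hand_size < threshold


# Usage with synthetic points (real values would come from the hand tracker).
points = [(0.0, 0.0)] * 21
points[MIDDLE_MCP] = (0.0, 100.0)
points[THUMB_TIP] = (10.0, 50.0)
points[INDEX_TIP] = (20.0, 50.0)
pinched = is_pinching(points)
```

The same pattern (pick a few landmark indices, compute a scale-normalized distance or angle) carries over to pose and face landmarks.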

2. Region of interest (ROI)

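If you only care about a sub-region (e.g., a face or a torso), restricting inference to an ROI reduces compute, because fewer pixels are processed, and often improves stability, because the subject appears at a more consistent scale with less background clutter. There are three common ways to do this: crop to a fixed region, reuse the bounding box tracked in the previous frame, or run a lighter detector to update the ROI over time.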
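The "reuse the previous bounding box" approach can be sketched as follows. This is one possible implementation under stated assumptions: landmarks are normalized (x, y) pairs in 0 to 1, the box is padded by a `margin` fraction so the subject stays inside it between frames, and the helper names `roi_from_landmarks` and `roi_to_frame` are hypothetical.

```python
import math


def roi_from_landmarks(landmarks, img_w, img_h, margin=0.25):
    """Return a pixel box (x0, y0, x1, y1) around normalized landmarks.

    The tight box is expanded by `margin` times its size on every side,
    then clamped to the image bounds.
    """
    xs = [x for x, _ in landmarks]
    ys = [y for _, y in landmarks]
    pad_x = (max(xs) - min(xs)) * margin
    pad_y = (max(ys) - min(ys)) * margin
    x0 = max(0, math.floor((min(xs) - pad_x) * img_w))
    y0 = max(0, math.floor((min(ys) - pad_y) * img_h))
    x1 = min(img_w, math.ceil((max(xs) + pad_x) * img_w))
    y1 = min(img_h, math.ceil((max(ys) + pad_y) * img_h))
    return x0, y0, x1, y1


def roi_to_frame(point, roi, img_w, img_h):
    """Map a point normalized to the ROI crop back to full-frame normalized coords."""
    x0, y0, x1, y1 = roi
    px = x0 + point[0] * (x1 - x0)
    py = y0 + point[1] * (y1 - y0)
    return px / img_w, py / img_h


# Usage: landmarks from the previous frame define the crop for the next one.
prev_landmarks = [(0.25, 0.25), (0.75, 0.75)]
roi = roi_from_landmarks(prev_landmarks, img_w=64, img_h=64)
# With a NumPy image, the crop would be frame[roi[1]:roi[3], roi[0]:roi[2]].
center_in_frame = roi_to_frame((0.5, 0.5), roi, img_w=64, img_h=64)
```

If tracking is lost (no landmarks returned for the crop), a reasonable fallback is to run on the full frame again and rebuild the ROI from that result.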